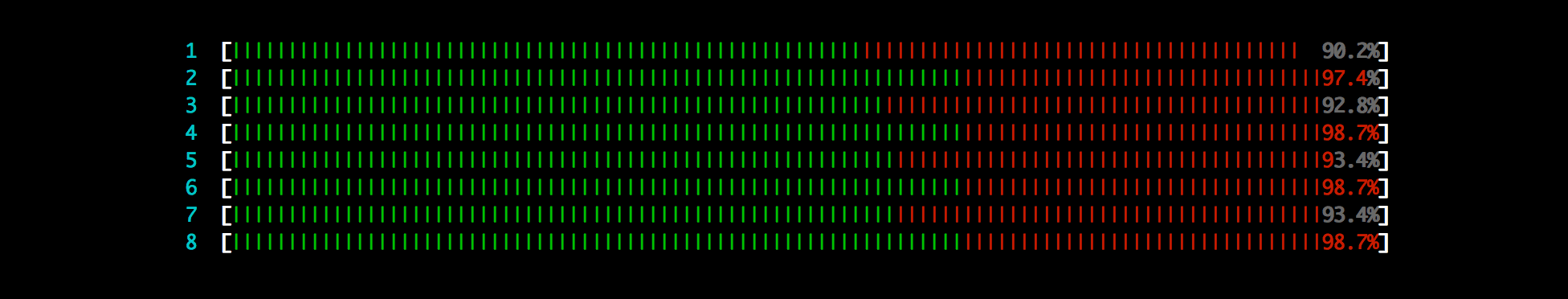

Our brains are very fast at recognizing patterns and shapes: we can often spot the seasonality of a signal in under a second. It is also possible to do this mathematically, using the Fourier transform.

First, we will explain what a Fourier transform is. Next, we will use the R language to find the seasonality of a website's traffic from its Google Analytics pageview report.
